Disclaimer: The AI industry is new and surprises me every day, so the following covers only a subset of the industry, though it does include the major players.

Every day, we see headlines of AI companies hitting new multi-billion dollar valuations. VCs are eager to invest in the latest and greatest names no matter the cost, if only to get a flashy logo into their portfolio. On one hand, you can’t deny that the momentum of AI is a once-in-a-decade event. The pace at which the technology is improving, and at which organizations are scrambling to use it to create value internally, is undeniable. On the other hand, we’ve just entered a high interest rate environment, where the strategy of “growth at all costs” has given way to “profits are king”. But if you look closer, things are reverting and people are forgetting how to run a business. Could it be that “growth at all costs” is back? Or is this now the reality of the business of AI? Let’s dive deep.

There are many types of businesses in AI, but let’s remember some basic fundamentals of running a business. As an investor you should always be looking at a few factors:

Does the company have a strategy of making back enough money to cover their expenses?

Are the revenues and growth justifying the valuation?

What’s the long term strategy for revenue and profits? Is this a sustainable strategy?

Again, this is 2023, not 2021: the age of high interest rates has made the cost of capital high. Or, to look at it another way, sitting on cash is actually a worthy investment if you’re getting 5% or more guaranteed.

Now let’s transition to AI. One of the basic principles of succeeding in the business of AI and technology is scale. With scale, the marginal cost of adding a customer or providing your service should be small. You cannot sustain a business where every additional customer simply adds more losses.

Now let’s cover a few strategies of companies that are commanding huge valuations and the types of businesses they offer:

Infrastructure

Let’s start with examining the Infrastructure layer. You need GPUs to train and run AI. Whatever hype you are hearing in the news, it’s going to require infrastructure. This points directly to Nvidia. At the moment no other chip maker comes close to their performance, and it may be several months or even years before we see this change.

There’s no denying the biggest winner right now is Nvidia. See stock chart here if you haven’t been following:

(NVDA is up over 200% year to date)

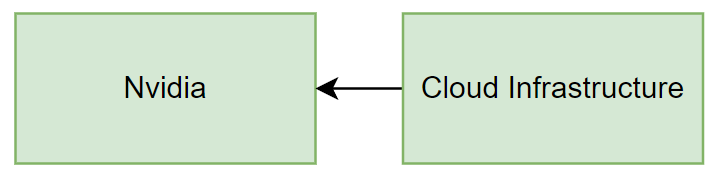

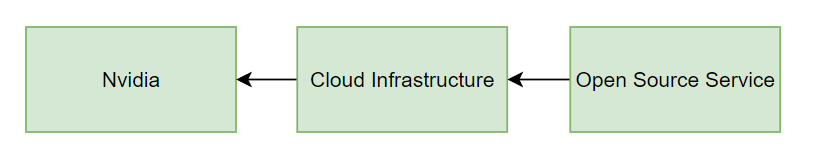

Any company that wants to train or run AI is going to benefit Nvidia. Even when you talk about cloud infrastructure like AWS, GCP, and Azure, they are throwing money at Nvidia first, then earning a margin on top by charging others to run instances on that hardware. So the biggest winner is the hardware manufacturer, followed by the cloud services that can charge money for using that hardware. Whatever AI company is created, it is going to need to spend money either to train models or to run them. Businesses like CoreWeave invested in this hardware to take advantage of the scarcity, and are now on track for $600M in annualized revenue. That’s a good return on investment.

(Green means both of these businesses can be highly profitable)

Next up, are the companies that train Foundational Models:

Foundational Model Companies

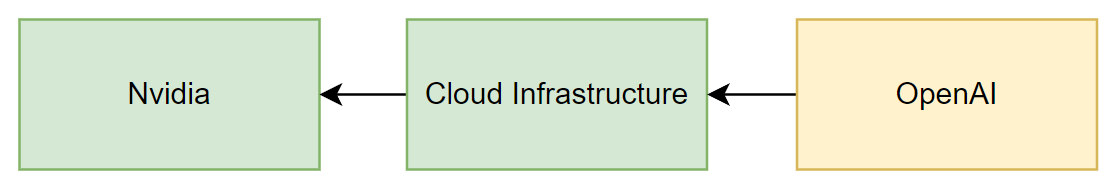

Foundational Model companies focus on building a proprietary LLM for others to use. This means they collect data, invest the capital (a lot of capital) to train a model, then either run services that serve inference from it or license the model to others so that they can make money off of it. The most obvious player here is OpenAI. Having invested an estimated ~$60M to train GPT-4, they can now charge others for running services and making queries against it. However, it is unclear whether this strategy is highly profitable. How much money they spend running inference with Microsoft is not public, but it is likely extremely high.

While there’s no denying their model is way ahead of others, that doesn’t mean others will not catch up. In fact, given the recent investments in H100s by companies like Inflection and other startups, training GPT-4-like models may be common in less than a year. The other factor is whether they are making enough margin on top of their costs. While OpenAI clearly has the most volume, the cost of training and running these services is unknown, though it will likely go down over time. However, if you look at the hierarchy here, the money OpenAI is making just trickles back down to cloud infrastructure (Microsoft), who then invest in hardware (Nvidia) or compute (CoreWeave). The tricky thing is that OpenAI has already decided to make their product free, and if their API charges too much, they cannot grab market share among the secondary services that use it for other applications. Growth at all costs.

OpenAI clearly has the user adoption and distribution. They can raise prices if they really need to turn up the revenue. Some of these other companies, like Inflection and Anthropic, also host proprietary models, yet lack the same user base. In fact, as more and more foundational model companies emerge, they will dilute the market and spread paying users thin. The problem is, these companies are commanding multi-billion dollar valuations! In order for these companies to make back their high training and infrastructure costs, they will need to create brand new markets and use cases that bring in revenue. I think this needs to start from the Enterprise layer, because that’s where people will invest money first. It will be hard to start at the consumer level and ask people to pay for multiple chat services; people are already sick of paying for multiple streaming services, after all.

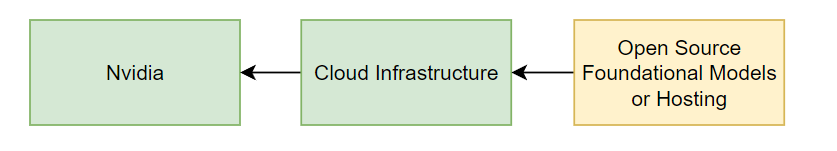

(Green means profit, Yellow unknown)

AI Service Companies

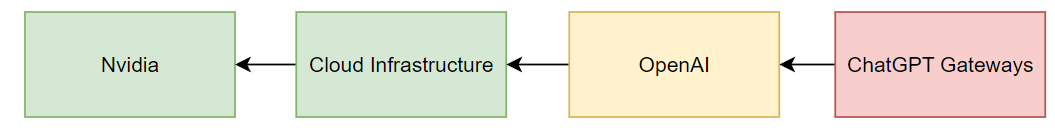

That brings us to the next layer. Secondary services that use ChatGPT or OpenAI’s API services are likely not making a lot of money. For one, some of these are simply wrappers or free services that point back to OpenAI’s foundational model. Even if a service found a niche use case where it could generate a prompt that does something extremely valuable, there would be no moat stopping other companies from doing the same. In fact, when Microsoft releases Microsoft 365 Copilot for its Office suite, I’d imagine it will kill off many of these wrapper services. You can already imagine ChatGPT plug-ins killing off a large number of startups. So what does this mean? If you are running a secondary services startup, you have no defensibility, and that means you will have to be extremely creative, have superior branding, or have large market share to stay ahead. You’ll have to make an increasingly large amount of money to cover the cost of the money flowing back down the chain:

(Red means likely not sustainable)

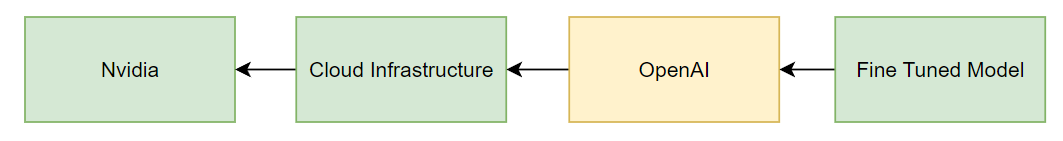

So how do you get defensibility? One strategy is to train fine-tuned models on top of foundational models. This creates a real barrier of effort and produces a more targeted quality of AI that general models cannot match. For example, Med-PaLM 2 from Google was trained on countless medical documents to create a model that can provide value in the medical field. Users would be willing to pay a premium for this, as they are already spending lots of money on doctors and research. Another example is Harvey, which uses a fine-tuned OpenAI model for legal use cases. This also makes sense, because legal work carries a high premium, and some of that spend can now go to AI services as well. People would be willing to pay more for this type of service than for ChatGPT. Fine-tuning is a cheaper way to get some defensibility than building foundational models, but the problem remains that the money still needs to flow back to OpenAI and on downstream. That means these services need to command a high premium to make their money back, which strategically could work in many situations.

Now let’s take a step back. Beyond transformers (LLMs), latent diffusion/GAN-type content creation has also become popular in the last year. Generative AI services for art and video are spinning up everywhere and also commanding billion dollar valuations.

The difference? Open source. The cost of hosting and running these models may have a fixed cost but very low variable cost since you are not paying a license or usage fee to someone like OpenAI for each additional customer. This means you can charge for the services on top without incurring too much pain as you scale higher (other than infrastructure). However, you lose defensibility because everyone will have the same access to this technology. This doesn’t mean it won’t work.
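To make the fixed-versus-variable cost argument concrete, here is a minimal sketch comparing two cost structures: a wrapper that pays a usage fee per customer, and a service that self-hosts an open source model. Every number below (the price, the fixed costs, the per-customer costs) is a hypothetical assumption chosen for illustration, not real data from any company.

```python
def monthly_cost(customers, fixed_cost, variable_cost_per_customer):
    """Total monthly cost: a fixed bill plus a cost that scales with customers."""
    return fixed_cost + variable_cost_per_customer * customers


def monthly_profit(customers, price, fixed_cost, variable_cost_per_customer):
    """Monthly revenue minus monthly cost at a flat subscription price."""
    revenue = price * customers
    return revenue - monthly_cost(customers, fixed_cost, variable_cost_per_customer)


# Hypothetical assumptions, for illustration only.
PRICE = 20.0  # monthly subscription price per customer

# Wrapper: small fixed cost, but pays a usage fee upstream for every customer.
WRAPPER = {"fixed_cost": 1_000.0, "variable_cost_per_customer": 15.0}

# Self-hosted open source model: large fixed hosting bill, small per-customer cost.
SELF_HOSTED = {"fixed_cost": 50_000.0, "variable_cost_per_customer": 2.0}

if __name__ == "__main__":
    for customers in (1_000, 10_000, 100_000):
        wrapper = monthly_profit(customers, PRICE, **WRAPPER)
        hosted = monthly_profit(customers, PRICE, **SELF_HOSTED)
        print(f"{customers:>7} customers: wrapper {wrapper:>12,.0f}  self-hosted {hosted:>12,.0f}")
```

Under these made-up numbers, the wrapper looks better at small scale, but the self-hosted service pulls ahead once the fixed cost is spread across enough customers, because far less of each new subscription flows back upstream.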

Two examples here include Midjourney and Adobe. Midjourney uses Stable Diffusion and charges users a subscription fee to generate images from arguably the best base model out there. It also helps that many of their outputs go viral on social media. Adobe uses Stable Diffusion for its Firefly product, which may blow the minds of existing Photoshop users, but people deep in AI can spot that all they did was build this open source technology into their UI. Brilliant. Not only that, both of these companies trained their own foundational models too. In theory, they own the entire distribution of revenue, with the only downstream being infrastructure. Again, this means Nvidia and cloud services win, but there’s a bigger piece of the pie for Midjourney and Adobe, assuming they can charge a high premium for their service. Midjourney was also able to keep their operating costs down by having a smaller team and not building a UI for their product (it’s all on Discord).

I think this arena can be highly profitable; the only problem is that the addressable market is smaller, since it’s centered around content creation. I am worried for companies like Stability, because they are spending lots of money as a foundational model company but not earning much revenue from licensing their models. Over time, their strategy will really need to target very high value use cases or somehow expand their reach among paying customers. In the short term, it is worrisome for them if they cannot capture that market today.

How about some other types of AI companies? We’ve heard of Hugging Face looking to raise at a $4B valuation, as well as other marketplaces that host AI models for users to consume. Even if you take a 100x multiple, that implies about $40M in revenue, and investors are hoping that $40M will turn into $400M very soon, simply from hosting different types of AI models. Given how many multi-billion dollar valuations there are, there will clearly be many losers and few winners. One could argue that Hugging Face does have a monopoly in its use case, along with brand name awareness and user adoption, but the costs of infrastructure spending will clearly flow downstream to the infrastructure players we mentioned earlier.
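The arithmetic behind that multiple can be sketched in a few lines. The valuation and the 100x multiple come from the paragraph above; the 10x "more typical" multiple is just the ratio implied by the $40M to $400M jump, and the 100% annual growth rate is purely a hypothetical assumption to show how long the jump would take.

```python
import math


def implied_revenue(valuation, revenue_multiple):
    """Revenue a valuation implies at a given valuation-to-revenue multiple."""
    return valuation / revenue_multiple


def years_to_target(current_revenue, target_revenue, annual_growth):
    """Years of compounding needed to grow from current to target revenue.

    annual_growth is a fraction, e.g. 1.0 means revenue doubles each year.
    """
    return math.log(target_revenue / current_revenue) / math.log(1 + annual_growth)


VALUATION = 4e9  # the $4B valuation mentioned above

today = implied_revenue(VALUATION, 100)  # revenue implied at a 100x multiple
target = implied_revenue(VALUATION, 10)  # revenue needed for a 10x multiple

# Hypothetical assumption: revenue doubles every year (100% annual growth).
years = years_to_target(today, target, annual_growth=1.0)

if __name__ == "__main__":
    print(f"Implied revenue today:  ${today:,.0f}")
    print(f"Revenue needed at 10x:  ${target:,.0f}")
    print(f"Years at 100% growth:   {years:.1f}")
```

Even with revenue doubling every year, closing a 10x gap takes more than three years, which is a long time to carry the infrastructure bill.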

(A big question mark as to whether open source infrastructure can make money in the long run)

I did want to pause and compare the various thoughts above with how we are handling the business of AI at Codeium. We are a foundational model company that also uses its model as a service. As mentioned above, this requires a lot of capital investment, right? Not necessarily. For us, making this work means investing heavy engineering effort in lowering the training and infrastructure costs. When we talked about revenue flowing downstream to hardware and Nvidia, the strategy here is to lower the cost of both of those as much as possible. By having a team that specializes in GPU optimization and AI/ML, it was possible to host extremely powerful language models on very efficient machines. This allowed us to give out a free product and replicate the infrastructure requirements for our Enterprise customers to host their own models on their private networks.

There are obviously some challenges here: once our Enterprise customers host our models, we cannot see them. Thus, we use the free product to iterate and experiment, making sure we are increasing value every time we roll out something new; then we can move these features to our security- and privacy-minded companies. For the time being, the strategy appears to be working.

To summarize, the business of AI is really composed of a few categories. You have the hardware and infrastructure side, the foundational models side, and the companies running these foundational models as services to earn revenue. The strategy here is very clear: lower your operating costs and increase the value of your services. Or just be Nvidia. In order to justify these valuations, revenue needs to grow 10x very soon, but with so many companies coming out left and right promising this, there will only be a few winners and many losers. However, with the thoughts above in mind, hopefully AI companies can position themselves accordingly and we don’t get another dot-com era bubble.

Until then, let’s keep building.